Chen says that while content moderation policies from Facebook, Twitter, and others succeeded in removing some of the most obvious English-language disinformation, the system often misses such content when it is in other languages. That work instead had to be done by volunteers like her team, who looked for disinformation and were trained to defuse it and reduce its spread. “Those mechanisms meant to catch certain words and stuff don’t necessarily catch that dis- and misinformation when it’s in a different language,” she says.

Google’s translation services and technologies such as Translatotron and real-time translation headphones use artificial intelligence to convert between languages. But Xiong finds these tools inadequate for Hmong, a deeply complex language where context is extremely important. “I think we’ve become really complacent and dependent on advanced systems like Google,” she says. “They claim to be ‘language accessible,’ and then I read it and it says something totally different.”

(A Google spokesperson admitted that smaller languages “pose a more difficult translation task” but said that the company has “invested in research that particularly benefits low-resource language translations,” using machine learning and community feedback.)

All the way down

The challenges of language online go beyond the United States and reach down, quite literally, to the underlying code. Yudhanjaya Wijeratne is a researcher and data scientist at the Sri Lankan think tank LIRNEasia. In 2018, he started tracking bot networks whose activity on social media encouraged violence against Muslims: in February and March of that year, a string of riots by Sinhalese Buddhists targeted Muslims and mosques in the cities of Ampara and Kandy. His team documented “the hunting logic” of the bots, catalogued hundreds of thousands of Sinhalese social media posts, and took the findings to Facebook and Twitter. “They’d say all sorts of nice and well-meaning things, basically canned statements,” he says. (In a statement, Twitter says it uses human review and automated systems to “apply our rules impartially for all people in the service, regardless of background, ideology, or placement on the political spectrum.”)

When contacted by MIT Technology Review, a Facebook spokesperson said the company commissioned an independent human rights assessment of the platform’s role in the violence in Sri Lanka, which was published in May 2020, and made changes in the wake of the attacks, including hiring dozens of Sinhala- and Tamil-speaking content moderators. “We deployed proactive hate speech detection technology in Sinhala to help us more quickly and effectively identify potentially violating content,” they said.

“What I can do with three lines of code in Python in English literally took me two years of looking at 28 million words of Sinhala”

Yudhanjaya Wijeratne, LIRNEasia

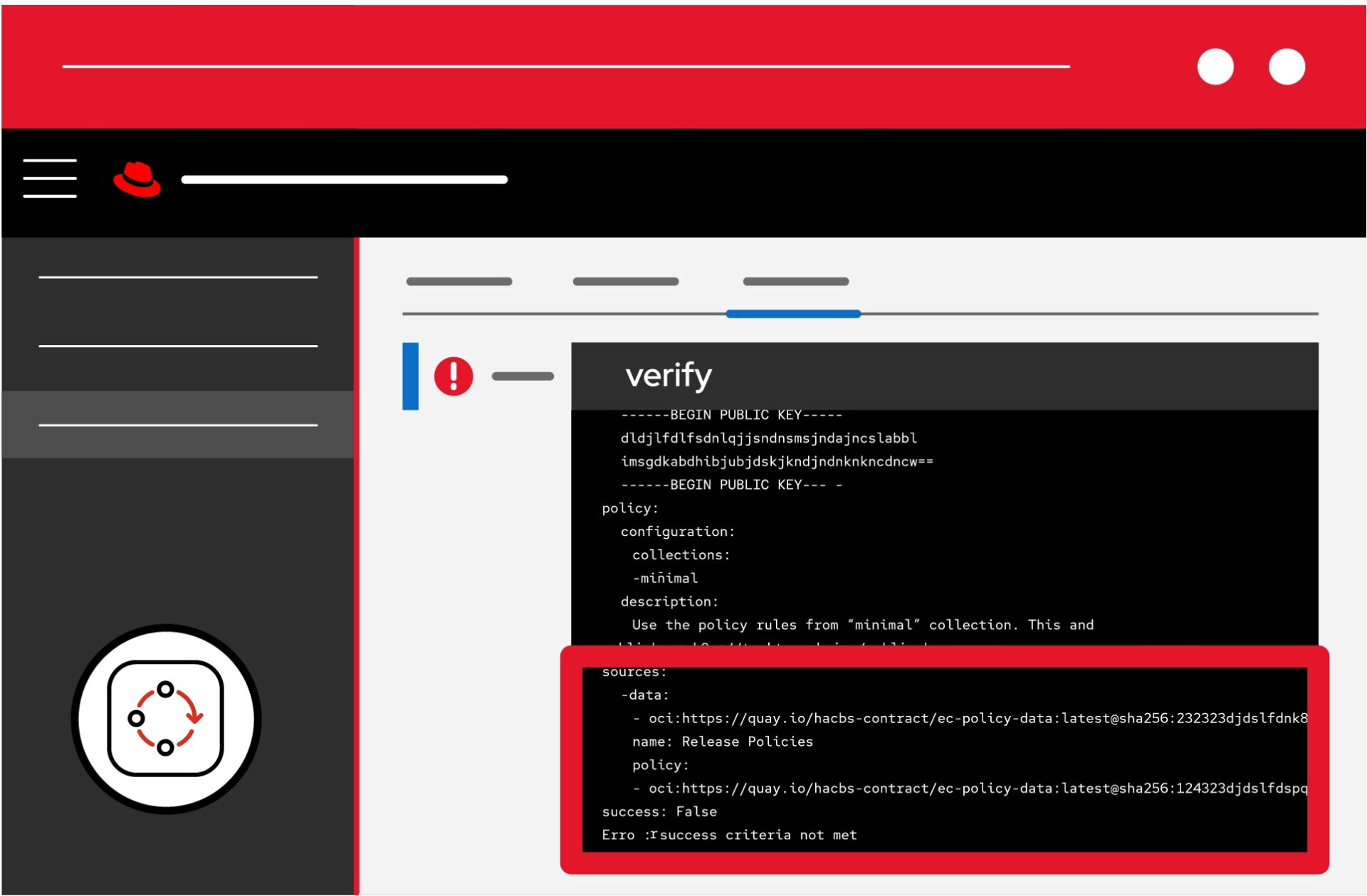

When the bot behavior continued, Wijeratne grew skeptical of the platitudes. He decided to look at the code libraries and software tools the companies were using, and found that the mechanisms to monitor hate speech in most non-English languages had not yet been built.

“Much of the research, in fact, for a lot of languages like ours has simply not been done yet,” Wijeratne says. “What I can do with three lines of code in Python in English literally took me two years of looking at 28 million words of Sinhala to build the core corpuses, to build the core tools, and then get things up to that level where I could potentially do that level of text analysis.”

After suicide bombers targeted churches in Colombo, the Sri Lankan capital, in April 2019, Wijeratne built a tool to analyze hate speech and misinformation in Sinhala and Tamil. The system, called Watchdog, is a free mobile application that aggregates news and attaches warnings to false stories. The warnings come from volunteers who are trained in fact-checking.

Wijeratne stresses that this work goes far beyond translation.

“Many of the algorithms that we take for granted that are often cited in research, in particular in natural-language processing, show excellent results for English,” he says. “And yet many identical algorithms, even used on languages that are only a few degrees of difference apart, whether they’re West Germanic or from the Romance tree of languages, may return completely different results.”

Natural-language processing is the basis of automated content moderation systems. Wijeratne published a paper in 2019 that examined the discrepancies between their accuracy in different languages. He argues that the more computational resources that exist for a language, like data sets and web pages, the better the algorithms can work. Languages from poorer countries or communities are disadvantaged.

“If you’re building, say, the Empire State Building for English, you have the blueprints. You have the materials,” he says. “You have everything on hand and all you have to do is put this stuff together. For every other language, you don’t have the blueprints.

“You have no idea where the concrete is going to come from. You don’t have steel and you don’t have the workers, either. So you’re going to be sitting there tapping away one brick at a time and hoping that maybe your grandson or your granddaughter might complete the project.”

Ingrained problems

The movement to provide those blueprints is called language justice, and it is not new. The American Bar Association describes language justice as a “framework” that preserves people’s rights “to communicate, understand, and be understood in the language in which they prefer and feel most articulate and powerful.”
